The 9th annual Augmented World Expo, the world’s largest AR and VR conference and expo, was held in Santa Clara, California, from May 30 to June 1. The event gathered 6,000 attendees, 400 speakers, and 250 exhibitors, including Unity, HP, NVIDIA, and other prominent technology vendors. Our customer RealityBLU was among them, showing its BLUairspace platform, developed in collaboration with HQSoftware. Vasil Tarasevich, HQSoftware’s CTO, attended the event as part of the RealityBLU team.

Stefan Agustsson, M.J. Anderson, and Vasil Tarasevich at AWE 2018

– Vasil, tell us more about the event itself. What caught your attention the most?

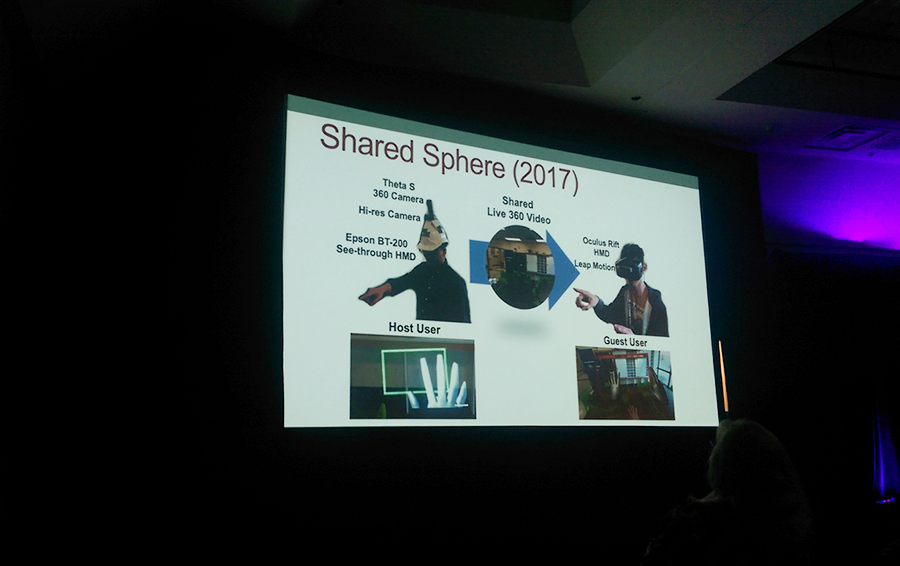

– An important part of the event is knowledge sharing. Industry leaders and smaller companies show what they have achieved in AR hardware and software development, and it is awesome. I listened to an interesting presentation about AR for communications. The idea is simple. Have you seen Star Wars? There, and in other sci-fi movies, you can see technology that displays a hologram of a person communicating from a distance. That is still technically challenging, but there is a simpler solution: people who want to communicate with someone remotely can wear AR glasses and see a rendering of that person. There is still the question of how to create this image, but it is easier than creating a futuristic hologram.

– Is this a camera plus an AR gear solution?

– Yes, it is. In addition to “teleporting” the speaker, we can also display the objects that the speaker interacts with. We can share a 3D model of a presentation so that both the real and virtual speakers can interact with it. These technologies are still being explored and exist as prototypes, but they are close to being applied for practical purposes.

The presentation “Augmented Teleportation” by Mark Billinghurst

– And if we add a suit, we can get haptic feedback as a bonus.

– Yes. One of the use cases for this kind of AR communication is remote support and guidance. The main difference from videoconferencing is that during a video conference the camera stands still, whereas in AR we see what the other person sees: he or she moves around and streams the image from the camera on the smart glasses, and the observers see it in AR too. In guided remote assistance, a person who maintains a machine of some kind can stream its image to a more experienced specialist, who can then show in AR how to interact with the machine. Both sides need to wear AR glasses. And if the glasses support hand recognition, the person who needs help can watch the assistant’s hands manipulate the machine and simply copy the movements.

– Vasil, how would you describe the participants of AWE?

– I can divide them into several groups. The first is hardware producers. I saw a lot of AR devices and smart glasses; their makers accounted for at least one-third of all participants, including Epson, Microsoft HoloLens, Meta, and others. A lot of companies presented projects developed for HoloLens. We talked to Meta representatives, who could be a good partner for our future projects: they now have perhaps the most advanced glasses with hand tracking.

In addition to glasses, there were other AR devices, such as keyboards and earphones. I talked to representatives of GAudioLab, a company that creates VR earphones. They came up with a brilliant idea and found their own market niche. Returning to glasses, companies equip their devices with hand tracking to make interacting with objects easier. Today people can use controllers like those of gaming consoles, gloves with sensors, and so on. A more progressive approach is to embed hand tracking into the glasses themselves, via cameras mounted on the glasses or other hardware parts. Leap Motion, for example, offers a “chip” that can be embedded into nearly any AR headset. The feedback is purely visual: when you touch something, the object is highlighted, but you do not feel it physically.

The next type of participant is the core technology provider: companies working on computer vision, a set of technologies that address basic AR needs like marker recognition and surface detection. The rest were companies offering diverse products.

Vuzix industrial smart glasses and Moverio smart glasses stands

– You visited the event with RealityBLU founders Stefan Agustsson and M.J. Anderson. Tell us more about the company itself. What did you showcase at AWE? What distinguishes your product from the others?

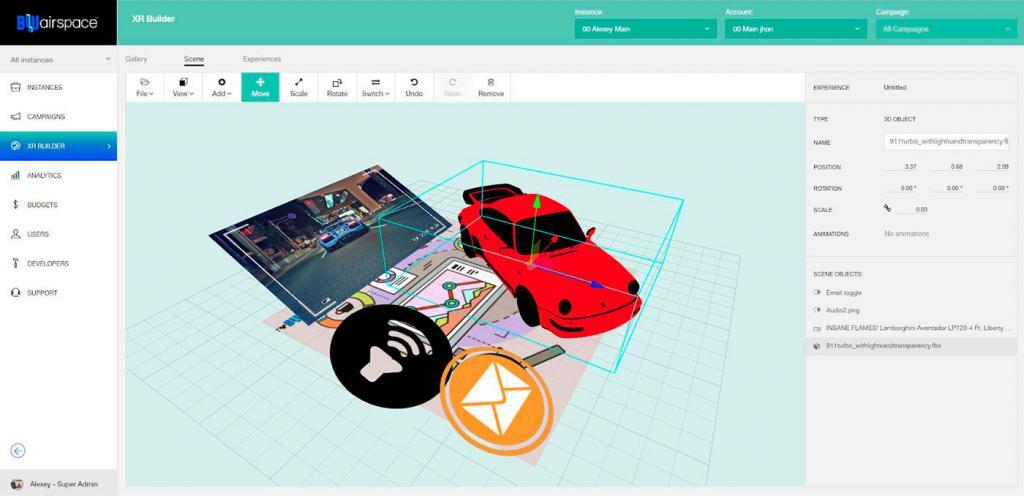

– Our platform solves the problem of commercial use. Many companies create AR solutions to show that AR is simple and fun, but how can businesses apply it? This is a higher-level task. RealityBLU offers a complete marketing tool that generates real business value. Our rivals brought apps that were intentionally simplified. They wanted to show exactly what I described, that AR is simple: in three clicks users can upload a marker, set an image, publish, and everything is ready. BLUairspace offers richer functionality: users can design complex 3D scenes with no special technical skills or knowledge. No rocket science.

BLUairspace XR Builder

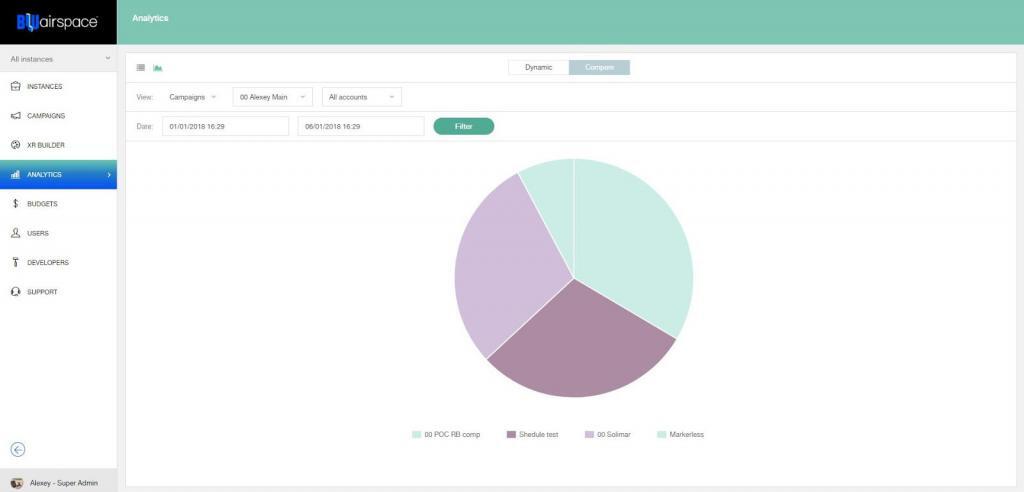

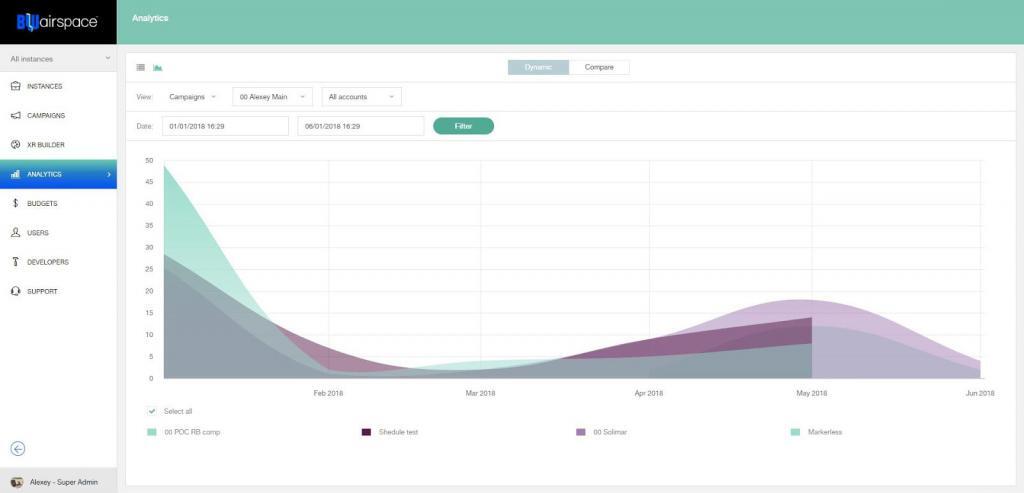

In addition, the platform offers flexible configuration, ad campaign management tools, and geolocation tracking. The app shows different AR experiences depending on the user’s location. For example, a customer wants to place an AR ad for a car dealership. When a user scans the marker on the dealer’s business card, they see the phone number of the local dealer instead of the number of the main office. This opens up marketing opportunities such as lead tracking and call statistics. We are also adding analysis tools to extend the marketing capabilities of the app; very few AR development companies have come up with the same idea.

BLUairspace analytics

Speaking of marketing tools, AR apps all share the same problem: a lack of content. RealityBLU has a case that illustrates this: its client, a toy manufacturer, advertises its toys via BLUairspace. A child can put a box with a building set in front of the camera. The box has an AR marker on it, the camera reads the marker through the BLUairspace app, and the kid sees what can be built from the set in the box: a 3D model is shown on a connected monitor. This customer had all the necessary models, but for some companies, creating them is an issue.

Along with these, companies offered some other typical business use cases: remote assistance apps, interactive instructions, AR learning, and AR visualization. One company I met creates AR tours. Their customer is building a yacht and wants to see what it looks like from the inside. It is not ready yet, so he takes a virtual tour. Real estate is another field where such solutions can show the inside of a building that is not finished yet.

– Where is AR heading now?

– Now AR is very personal: no one else sees what you see. Pokémon Go was so popular because it was the first social AR experience that connected such a large number of people who were seeing the same AR experience and interacting with the same objects. I think we are now approaching a pretty obvious use case: creating AR objects on the go and sharing them with a large number of other users, e.g. adding comments to places or objects on the streets. Have you seen Blade Runner 2049? A lot of the technologies shown in the movie exist today. They are still primitive and simple, but it is possible to implement them. We are now starting to develop futuristic AR solutions.

Sergei Vardomatski

Founder